Yesterday was the first real test of our new script debugger at RB2B — and it delivered.

I'd spent time building a tool that lets our AI tap into a dedicated debugging API whenever a user hits an installation issue. The idea was straightforward: instead of a user getting stuck, firing off a support request, and waiting for a human to respond, the AI intercepts the problem in real time, runs a diagnosis, and walks them through a fix on the spot.
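To make that flow concrete, here is a minimal sketch of the intercept, diagnose, and respond loop. It is an illustration under assumptions, not our actual implementation: the `Diagnosis` shape, the `fake_debugger` stub, the keyword check, and `handle_message` are all hypothetical names, and a real assistant would decide when to call the tool through its own tool-calling logic rather than a keyword list.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Diagnosis:
    """Hypothetical result shape for a debugger call."""
    ok: bool
    problem: Optional[str] = None
    fix: Optional[str] = None


# Hypothetical interface: takes a site URL, returns a Diagnosis.
DebuggerApi = Callable[[str], Diagnosis]


def fake_debugger(site_url: str) -> Diagnosis:
    """Stub for illustration only; a real client would call the debugging API."""
    if "missing" in site_url:
        return Diagnosis(
            ok=False,
            problem="script tag not found",
            fix="Paste the snippet into the <head> of every page.",
        )
    return Diagnosis(ok=True)


# Crude stand-in for the AI deciding that a message is about installation.
INSTALL_KEYWORDS = ("install", "script", "snippet", "not tracking")


def looks_like_install_issue(message: str) -> bool:
    text = message.lower()
    return any(keyword in text for keyword in INSTALL_KEYWORDS)


def handle_message(message: str, site_url: str, debugger: DebuggerApi) -> str:
    """Run the debugger for install issues and turn the result into a reply."""
    if not looks_like_install_issue(message):
        return "NOT_AN_INSTALL_ISSUE"
    diagnosis = debugger(site_url)
    if diagnosis.ok:
        return f"The script looks correctly installed on {site_url}."
    return f"Found an issue: {diagnosis.problem}. Suggested fix: {diagnosis.fix}"


if __name__ == "__main__":
    print(handle_message("My script isn't working", "https://missing.example", fake_debugger))
```

The point of the sketch is the ordering: the diagnosis runs inside the conversation, so the user gets a fix in the reply instead of a ticket number.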

Simple in theory. But you never really know until it's live.

The First 24 Hours

Nine times yesterday, our AI called the debugger API to help users work through installation issues — no ticket, no wait, no escalation. Just a problem surfaced and a problem solved, in the moment.

That might not sound like a huge number, but consider the alternative. Before this tool existed, those same nine conversations would have played out very differently. The AI would have hit a wall, the user would have gotten frustrated, and at least half of them, conservatively, would have ended up in my inbox.

That's four or five support escalations in a single day. At roughly 20 minutes per resolution, I'm looking at about an hour and a half of my time, gone. And that's not counting the mental cost of context switching: jumping out of product work or training prep to troubleshoot someone's environment, then trying to find my place again afterward.
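For anyone who wants to check the math, the back-of-the-envelope estimate works out like this. The inputs are the figures from this post (nine debugger calls, a conservative one-half escalation rate, roughly 20 minutes per resolution); the function name is just for illustration.

```python
import math


def estimated_minutes_saved(calls: int, escalation_rate: float, minutes_each: int) -> tuple[int, int]:
    """Return a (low, high) range of support minutes avoided."""
    expected = calls * escalation_rate      # 9 * 0.5 = 4.5 escalations
    low_escalations = math.floor(expected)  # 4
    high_escalations = math.ceil(expected)  # 5
    return low_escalations * minutes_each, high_escalations * minutes_each


low, high = estimated_minutes_saved(calls=9, escalation_rate=0.5, minutes_each=20)
print(f"{low} to {high} minutes")  # 80 to 100 minutes
```

That range, 80 to 100 minutes, is where the "hour and a half" figure comes from.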

The hidden tax of support work isn't just the time it takes. It's everything that gets interrupted around it.

The Part I Didn't Expect

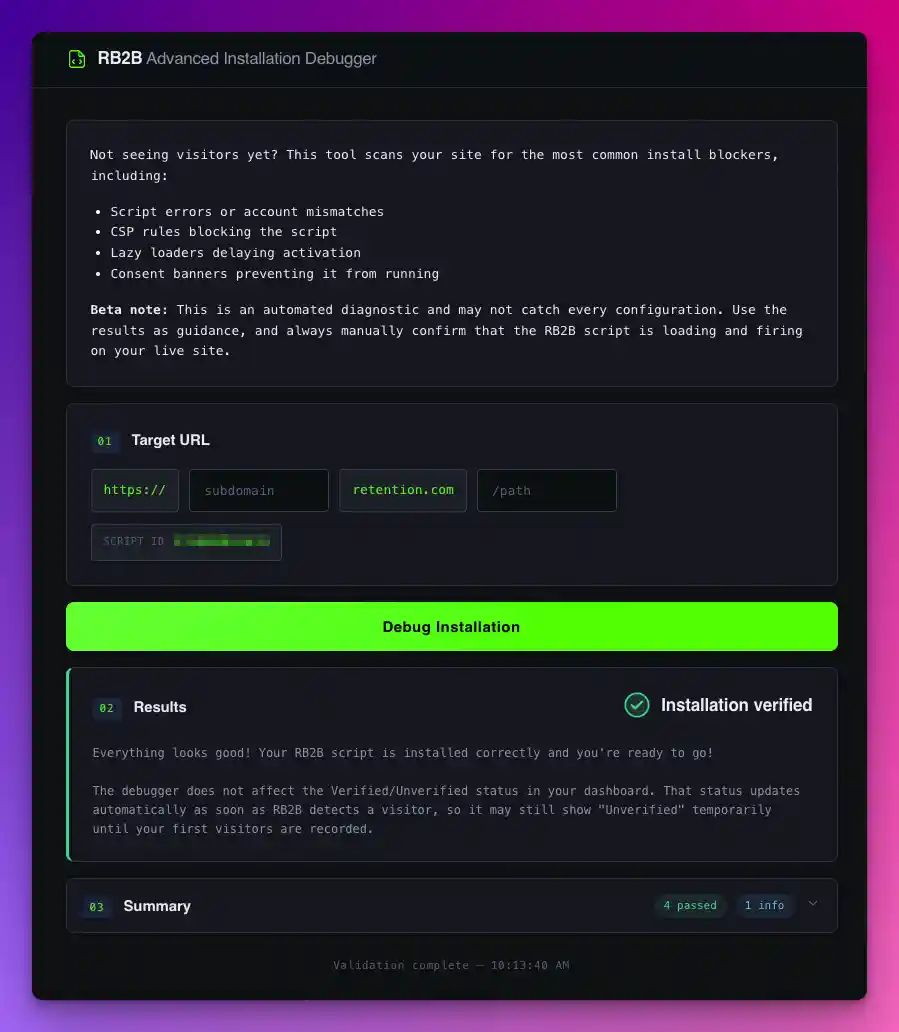

Here's what surprised me most: 16 users didn't wait for the AI at all.

They spotted the debugger tool right in their dashboards, ran it themselves, and solved their own problems — completely independently. No AI, no support, no me. Just a user, a tool, and a resolved issue.

That's the version of self-serve that actually works. Not a help doc buried three clicks deep, but a diagnostic tool surfaced exactly where someone needs it, exactly when they need it.

What This Actually Changes

There's a version of support where everything flows through a person. Users wait, the person context-switches, answers get written, tickets get closed. It works — but it doesn't scale, and it burns out whoever's at the center of it.

What I'm building toward is something different: a system where the AI handles what it can, users handle what they can, and human attention is reserved for the problems that genuinely need it.

One day in, and I'm already seeing that take shape. Fewer interruptions for me. Faster answers for users. And a growing sense that the hardest installation issues — the ones that used to feel like they could only be solved by someone who'd seen everything — might not need a human at the center after all.

I'm curious to see where this goes.