Everyone knows AI agents need ongoing training. Most teams just don't do it.

The concept of continuous improvement isn't new. But somewhere between deployment and day-to-day operations, the feedback loop gets deprioritized. The agent goes live, attention moves to the next thing, and the system plateaus at the level it launched with.

That's the "set it and forget it" trap. And that's where most of the value gets left behind.

Real conversations are your best training data

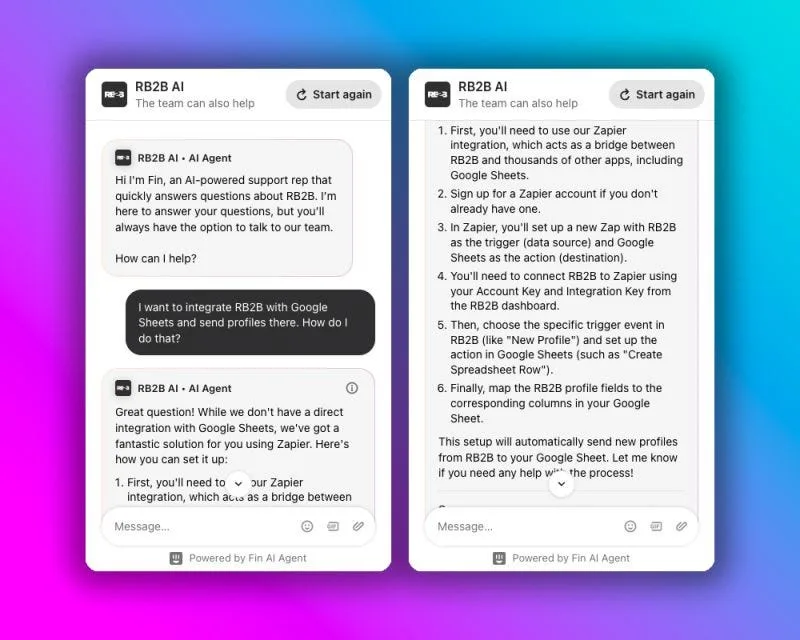

I was reviewing a conversation between our AI sales agent and a prospect this week. The prospect asked a reasonable question. The agent gave a technically correct but incomplete answer — missing the nuance that would have actually moved the conversation forward.

So I stopped what I was doing, opened our knowledge base, and wrote a full internal article on that topic. Not a quick patch. A thorough treatment of the concept, with all the edge cases and context the agent needed to handle it well.

Then I went back and replayed the conversation — same messages, same flow — to verify the agent could now answer it properly.

That whole loop took maybe 30 minutes.

Why this compounds

The reason this matters isn't the individual fix. It's the compounding effect.

Every real conversation your agent has is a signal. A gap in the agent's knowledge isn't a failure; it's a data point. The practitioners who treat it that way end up with agents that get meaningfully better over weeks and months. Those who don't are left with agents that plateau.

Traditional software doesn't work like this. You ship a feature, and it behaves the same way until you change the code. AI agents are different. The "code" is the knowledge and context you give them, and that knowledge can be continuously refined in response to actual usage.

That's a fundamentally different — and more powerful — model. But only if you build the operational habits to take advantage of it.

The loop itself is simple

Review real conversations. Identify where the agent fell short. Write the knowledge that would have filled the gap. Test with the original inputs. Repeat.

Review → Update → Test → Repeat.
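
For anyone who wants to make the loop repeatable, here is a minimal sketch in Python. Everything in it is hypothetical: `KnowledgeBase`, `ReplayCase`, `toy_agent`, and `replay` are illustrative names, not part of any particular agent framework, and the keyword-matching "agent" is only a stand-in for a real one. The billing question and article text are made-up sample data. The point is the shape of the check: record the original messages, note what a good answer must mention, and replay the same inputs after updating the knowledge.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ReplayCase:
    """A recorded conversation plus the phrases a good answer must mention."""
    messages: list[str]       # the prospect's original messages, in order
    must_mention: list[str]   # phrases the final answer should contain


@dataclass
class KnowledgeBase:
    """Hypothetical knowledge base: topic -> article body."""
    articles: dict[str, str] = field(default_factory=dict)

    def add_article(self, topic: str, body: str) -> None:
        self.articles[topic.lower()] = body


def toy_agent(kb: KnowledgeBase, message: str) -> str:
    """Stand-in for a real agent: returns every article whose topic appears in the message."""
    text = message.lower()
    hits = [body for topic, body in kb.articles.items() if topic in text]
    return " ".join(hits) if hits else "I'm not sure about that."


def replay(case: ReplayCase, agent: Callable[[str], str]) -> list[str]:
    """Replay the original messages and return the required phrases still missing
    from the agent's final answer."""
    answer = ""
    for message in case.messages:
        answer = agent(message)
    return [p for p in case.must_mention if p.lower() not in answer.lower()]


# Made-up sample data, for illustration only.
kb = KnowledgeBase()
case = ReplayCase(
    messages=["Do you support annual billing?"],
    must_mention=["annual", "discount"],
)


def agent(message: str) -> str:
    return toy_agent(kb, message)


gaps_before = replay(case, agent)  # Review: what is missing today?
kb.add_article(                    # Update: write the missing knowledge
    "annual billing",
    "Annual plans are available and include a discount.",
)
gaps_after = replay(case, agent)   # Test: same inputs, new knowledge
```

In a real setup, `agent` would call your deployed agent, and the list of `ReplayCase` objects would grow by one each time you review a conversation, so earlier fixes keep getting rechecked. That is the "Repeat" step.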

The simplicity is the point. This doesn't require a data science team or a fine-tuning pipeline. Just someone who cares enough to close the loop, and the discipline to do it consistently.

What most teams miss

When an AI agent underperforms, the instinct is to look at the model, the prompt, or the tooling. Those things matter. But the most common culprit is much more mundane: the agent didn't have the information it needed.

You can't expect an agent to give good answers on topics you've never written down. The knowledge base isn't a one-time setup task. It's a living document that gets better every time you pay attention to a real conversation.

The teams that figure this out early end up with a genuine advantage. Their agents improve faster, handle edge cases better, and require less human escalation over time. Not because they picked a better model, but because they built a better feedback loop.

The flywheel is the product

If you're running AI agents at any meaningful scale, the flywheel isn't a nice-to-have. It is the product. The agent you deploy on day one is just the starting point. What it becomes over the next six months depends entirely on whether you've built the operational habit of closing the loop.

Start small. Review one conversation today. Write one article. Test it. That's the whole thing.